It's true, LLMs are better than people – at creating convincing misinformation

Tan KW

Publish date: Wed, 31 Jan 2024, 07:40 AM

Computer scientists have found that misinformation generated by large language models (LLMs) is more difficult to detect than artisanal false claims hand-crafted by humans.

Researchers Canyu Chen, a doctoral student at Illinois Institute of Technology, and Kai Shu, assistant professor in its Department of Computer Science, set out to examine whether LLM-generated misinformation can cause more harm than the human-generated variety of infospam.

In a paper titled "Can LLM-Generated Misinformation Be Detected?", they focus on the challenge of computationally detecting misinformation, meaning content with deliberate or unintentional factual errors. The paper has been accepted for the International Conference on Learning Representations later this year.

This is not just an academic exercise. LLMs are already actively flooding the online ecosystem with dubious content. NewsGuard, a misinformation analytics firm, says that so far it has "identified 676 AI-generated news and information sites operating with little to no human oversight, and is tracking false narratives produced by artificial intelligence tools."

The misinformation in the study comes from prompting ChatGPT and open-source LLMs, including Llama and Vicuna, to create content based on human-generated misinformation datasets, such as Politifact, Gossipcop and CoAID.

Eight LLM detectors (ChatGPT-3.5, GPT-4, Llama2-7B, and Llama2-13B, each used in two different modes) were then asked to evaluate the human- and machine-authored samples.

These samples carry the same semantic details, meaning the same content, but differ in style, tone and wording because of differences in authorship and in the prompts given to the LLMs generating the content.
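As a rough illustration of this kind of comparison, the sketch below measures how often a detector flags misinformation in a human-written set versus an LLM-rewritten set with the same meaning. It is not the researchers' code: `detection_rate`, `toy_detector` and the sample sentences are all hypothetical, and the keyword check merely stands in for a real LLM detector.

```python
from typing import Callable, Iterable


def detection_rate(detector: Callable[[str], bool], samples: Iterable[str]) -> float:
    """Return the fraction of misinformation samples the detector flags."""
    items = list(samples)
    if not items:
        raise ValueError("need at least one sample")
    flagged = sum(1 for text in items if detector(text))
    return flagged / len(items)


def toy_detector(text: str) -> bool:
    """Hypothetical stand-in for an LLM detector: a crude keyword check."""
    return "miracle" in text.lower()


# Made-up examples: each LLM-style rewrite keeps the claim but changes the wording.
human_written = [
    "MIRACLE cure ends all disease!!!",
    "Miracle pill melts fat overnight",
]
llm_rewritten = [
    "A new study suggests a treatment may end all disease.",
    "Researchers report a miracle pill that reduces fat overnight.",
]

human_rate = detection_rate(toy_detector, human_written)
llm_rate = detection_rate(toy_detector, llm_rewritten)
print(f"human-written: {human_rate:.2f}, LLM-rewritten: {llm_rate:.2f}")
```

In this toy setup the rewritten set is flagged less often because the surface cues the detector relies on have changed while the false claim has not.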

https://www.theregister.com//2024/01/30/llms_misinformation_human/

More articles on Future Tech

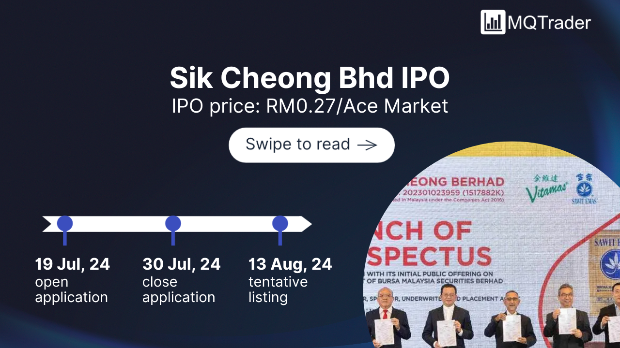

Created by Tan KW | Jul 30, 2024

Created by Tan KW | Jul 30, 2024

Created by Tan KW | Jul 30, 2024

Created by Tan KW | Jul 30, 2024

Created by Tan KW | Jul 30, 2024

Created by Tan KW | Jul 30, 2024